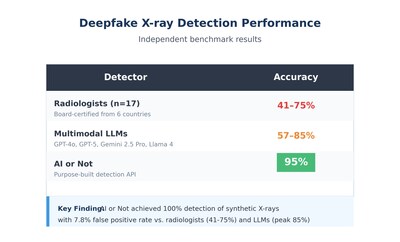

AI or Not Achieves 100% Detection of Deepfake X-Rays in Independent Test, With 95% Overall Accuracy, Exceeding Results Reported for Radiologists and Leading Multimodal LLMs

PR Newswire

SAN FRANCISCO, May 12, 2026

Benchmark using curated dataset from landmark Radiology study points to a path forward for protecting medical imaging integrity, insurance claims, and patient safety

SAN FRANCISCO, May 12, 2026 /PRNewswire/ — AI or Not, a leader in AI-generated content detection, today announced results from an independent benchmark conducted using the curated dataset made available from the recent Radiology study “The Rise of Deepfake Medical Imaging.” In a blinded test using the curated dataset of authentic and AI-generated radiographs, AI or Not’s detection technology achieved 95% overall accuracy while detecting 100% of synthetic X-rays, a performance that exceeded what was reported for both the board-certified radiologists and the leading multimodal large language models (LLMs) in the study.

The peer-reviewed study, published in Radiology in March 2026 by researchers at the Icahn School of Medicine at Mount Sinai, found that radiologists correctly identified only 41% of deepfake X-rays in an initial blinded review, a figure that rose to roughly 75% once they were told AI-generated images were present. The four multimodal LLMs tested (GPT-4o, GPT-5, Gemini 2.5 Pro, and Llama 4 Maverick) ranged from 57% to 85% accuracy.

Benchmark Results

AI or Not ran the curated dataset made publicly available by the researchers through its AI detection software under blinded conditions. The dataset and its source images were confirmed not to be part of AI or Not’s model training data.

False positive rate: On authentic X-rays in the dataset, AI or Not correctly cleared 92.21% as real, which corresponds to a 7.79% false positive rate. The detection platform missed none of the AI-generated images, achieving 100% detection of synthetic content.
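For readers who want to see how the three headline figures relate, the following is a minimal Python sketch of the standard confusion-matrix arithmetic. The release does not state how many images were in each class, so the counts below are hypothetical, chosen only so that the resulting percentages land near the figures quoted above. The names `ConfusionCounts` and `detection_metrics` are illustrative and are not part of any AI or Not product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    true_pos: int   # synthetic images flagged as synthetic
    false_neg: int  # synthetic images passed as real
    true_neg: int   # authentic images cleared as real
    false_pos: int  # authentic images flagged as synthetic


def detection_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Compute per-class rates and overall accuracy from raw counts."""
    synthetic = c.true_pos + c.false_neg
    authentic = c.true_neg + c.false_pos
    total = synthetic + authentic
    return {
        "detection_rate": c.true_pos / synthetic,        # share of fakes caught
        "specificity": c.true_neg / authentic,           # share of real images cleared
        "false_positive_rate": c.false_pos / authentic,  # 1 - specificity
        "accuracy": (c.true_pos + c.true_neg) / total,   # all correct calls / all images
    }


# HYPOTHETICAL counts, not taken from the release or the study.
example = ConfusionCounts(true_pos=43, false_neg=0, true_neg=71, false_pos=6)

for name, value in detection_metrics(example).items():
    print(f"{name}: {value:.2%}")
```

The sketch makes one point explicit: overall accuracy is a blend of the two per-class rates weighted by class size, so a 100% detection rate and a 95% overall accuracy can coexist whenever some authentic images are flagged in error.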

The study’s authors warned that synthetic medical images create real-world risks across:

- Insurance fraud — fabricated imaging supporting false claims

- Legal evidence — tampered diagnoses introduced into litigation or disability cases

- Research integrity — synthetic images polluting training datasets and published studies

- Patient safety — manipulated scans influencing treatment decisions

Notably, the study found no correlation between a radiologist’s years of experience and their detection accuracy. Expertise alone is not a defense against this category of fraud.

“The Mount Sinai team showed just how vulnerable medical imaging is to generative AI, and we needed someone to put numbers on it,” said Anatoly Kvitnitsky, CEO & Founder of AI or Not. “They got the framing right, too. No single layer solves this. It takes clinician training, watermarking, dataset governance, and detection working together. Our benchmark shows that purpose-built detection can close the gap that human experts and general-purpose AI models cannot. We’re publishing these results to support the conversation, not to end it.”

Methodology

- Dataset: The curated dataset made publicly available by Tordjman et al. through the study repository (https://noneedanick.github.io/DeepFakeXRay/), comprising authentic and AI-generated radiographs across multiple anatomic regions.

- Generator tested: Images in the curated dataset were generated using GPT-4o (OpenAI).

- Conditions: Blinded — images from the dataset were uploaded to the AI or Not detection platform without prior knowledge of authentic vs. synthetic labels. Labels were used only post-hoc to score results.

- Training data confirmation: AI or Not engineering confirmed that the curated dataset and its source distributions were not present in model training data, ensuring an out-of-distribution evaluation.

- Full results: Per-image detection results for all radiographs in the test set, including detection scores and actual classifications (authentic vs. AI-generated), are available for download here.
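The post-hoc scoring step described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not AI or Not's actual pipeline: the column names (`image_id`, `score`, `label`), the sample rows, the 0.5 decision threshold, and the function `score_blinded_run` are all hypothetical, and the layout of the downloadable results file may differ.

```python
import csv
import io

# HYPOTHETICAL per-image results: a detector score in [0, 1] recorded during
# the blinded run, plus the ground-truth label joined in only afterwards.
SAMPLE_CSV = """image_id,score,label
img_001,0.98,synthetic
img_002,0.03,authentic
img_003,0.61,authentic
img_004,0.87,synthetic
"""


def score_blinded_run(csv_text: str, threshold: float = 0.5) -> dict[str, int]:
    """Tally confusion-matrix counts once labels are revealed."""
    counts = {"true_pos": 0, "false_neg": 0, "true_neg": 0, "false_pos": 0}
    for row in csv.DictReader(io.StringIO(csv_text)):
        flagged = float(row["score"]) >= threshold  # detector's call
        is_synthetic = row["label"] == "synthetic"  # revealed label
        if is_synthetic:
            counts["true_pos" if flagged else "false_neg"] += 1
        else:
            counts["false_pos" if flagged else "true_neg"] += 1
    return counts


print(score_blinded_run(SAMPLE_CSV))
```

Keeping scores and labels separate until this step is what makes the run blinded: the threshold is applied to scores alone, and the labels enter only when the counts are tallied.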

Frequently Asked Questions

Can AI-generated medical images fool radiologists?

Yes. A March 2026 study published in Radiology by researchers at the Icahn School of Medicine at Mount Sinai found that board-certified radiologists correctly identified only 41% of AI-generated deepfake X-rays in an initial blinded review. Accuracy rose to roughly 75% when radiologists were warned that synthetic images might be present. Notably, the study found no correlation between a radiologist’s years of experience and detection accuracy.

Can large language models (LLMs) like GPT-5 or Gemini detect deepfake medical images?

Performance varies significantly by model. In the Mount Sinai study, the four leading multimodal LLMs tested (GPT-4o, GPT-5, Gemini 2.5 Pro, and Llama 4 Maverick) achieved between 57% and 85% accuracy on the same dataset. General-purpose multimodal AI was unable to reliably distinguish authentic radiographs from synthetic ones.

How accurate is AI or Not at detecting deepfake X-rays?

In a blinded benchmark on the curated dataset provided by the Mount Sinai researchers, AI or Not’s detection platform achieved 95% overall accuracy, correctly identifying 92.21% of authentic radiographs as real and detecting 100% of AI-generated images as synthetic. The dataset and its source distributions were confirmed not to be present in AI or Not’s model training data.

What are the real-world risks of synthetic medical images?

The Mount Sinai study identified four primary risk categories: insurance fraud through fabricated imaging supporting false claims; legal evidence tampering in litigation or disability cases; research integrity issues from synthetic images polluting training datasets and published studies; and patient safety risks from manipulated scans influencing treatment decisions.

How are deepfake medical images created?

Synthetic radiographs can be generated using general-purpose multimodal models such as GPT-4o, as well as domain-specific medical image generators such as RoentGen. Both were used in the Mount Sinai study to create the deepfake images that radiologists and AI systems were tested against.

What can hospitals, insurers, and courts do to protect against medical deepfakes?

The Mount Sinai authors emphasize that no single defense is sufficient. Effective protection combines clinician training to recognize synthetic image artifacts, watermarking and provenance standards for medical imaging, dataset governance to prevent contamination of research and training data, and purpose-built detection technology. Each layer addresses gaps the others cannot.

Can the human eye spot AI-generated X-rays?

In most cases, no. Researchers note that synthetic medical images often appear “too perfect” with unusually smooth bone surfaces, excessive symmetry, uniform soft-tissue textures, and unnaturally clean fractures. Despite these recurring artifacts, the patterns are subtle enough that even experienced radiologists frequently miss them. Clinical training emphasizes pathology recognition, not detection of synthetic over-tidiness.

About AI or Not

AI or Not is the leading AI detection API for images, text, audio, video, and deepfakes. Powered by industry-leading models that deliver 98.9% accuracy, the API enables developers, businesses, and enterprises to embed synthetic media detection directly into their products and workflows. Trusted across a wide range of industries and use cases — from media and fintech to fraud prevention and identity verification — AI or Not is built for speed, precision, and scale. Learn more at aiornot.com.

View original content to download multimedia: https://www.prnewswire.com/news-releases/ai-or-not-achieves-100-detection-of-deepfake-x-rays-in-independent-test-with-95-overall-accuracy-exceeding-results-reported-for-radiologists-and-leading-multimodal-llms-302768497.html


SOURCE AI or Not